Orchestrating Multi-Agent Systems: A Deep Dive into LangGraph vs. CrewAI

AI Overview

For technical leadership, the choice between LangGraph and CrewAI is a choice between control and velocity. CrewAI excels in role-based, linear, or hierarchical task execution, making it ideal for content workflows and research. LangGraph provides a low-level, graph-based framework designed for complex, cyclical reasoning and state-persistent applications. For enterprise-grade agentic AI systems, LangGraph is the standard for custom logic, while CrewAI is the accelerator for standardized operations.

The Architecture of Agentic Intelligence

Orchestration is the difference between a collection of prompts and a functioning digital workforce. As enterprises move beyond simple RAG (Retrieval-Augmented Generation) to autonomous agents, the underlying framework dictates the system’s limits.

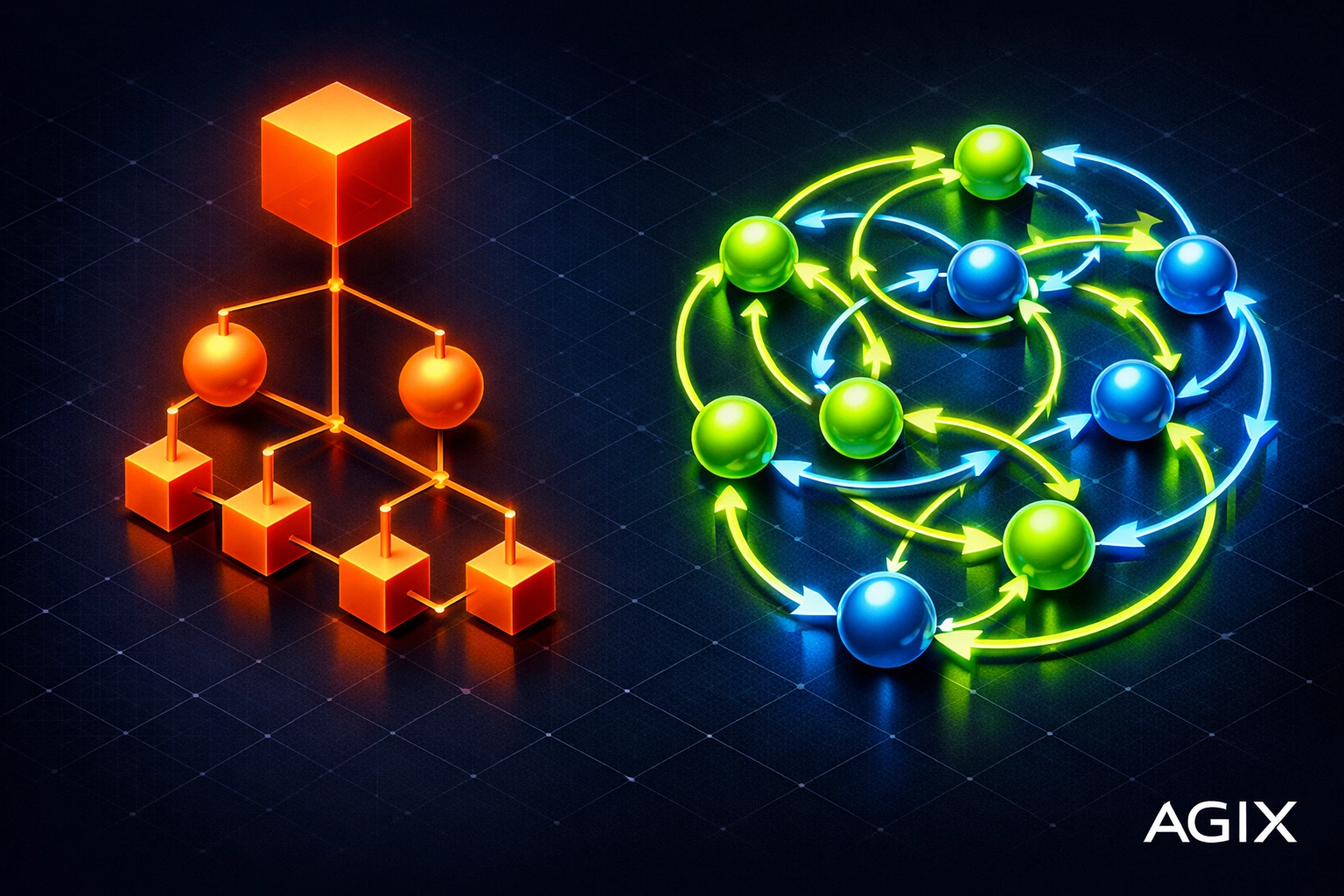

Currently, the market is split between two primary philosophies:

- Role-Based Orchestration (CrewAI): Modeling agents after human organizational structures (Manager, Researcher, Writer).

- State-Management Loops (LangGraph): Modeling agents as state machines over directed graphs that may contain cycles.

At Agix Technologies, we’ve deployed both. The “right” choice depends entirely on the complexity of your decision-making loops and the necessity of state persistence.

CrewAI: High-Level Abstraction for Rapid Deployment

CrewAI began as a layer on top of LangChain (recent releases run as a standalone framework) and abstracts the complexity of agent interaction into a “Crew” metaphor. It is highly effective for processes that follow a predictable, hierarchical path.

- The Philosophy: You define agents with specific “roles,” “backstories,” and “goals.” You then assign them “tasks” and group them into a “Crew.”

- The Workflow: Usually sequential or hierarchical. In sequential mode, each task’s output feeds the next task. In hierarchical mode, a manager agent oversees the process, delegating tasks and reviewing outputs before proceeding.

- Best Use Case: Marketing automation, standardized financial reporting, and competitive research.
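To make the role-based pattern concrete, here is a minimal, framework-free sketch of a sequential crew. It is not CrewAI’s API: the `Agent`, `Task`, and `run_sequential` names are hypothetical, and `fake_llm` is a deterministic stub standing in for a real model call.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical stand-in for an LLM call: prompt in, text out.
LLM = Callable[[str], str]


@dataclass
class Agent:
    role: str
    goal: str
    backstory: str

    def perform(self, task_description: str, context: str, llm: LLM) -> str:
        # The role, backstory, and goal are folded into the prompt.
        prompt = (
            f"You are {self.role}. {self.backstory}\n"
            f"Goal: {self.goal}\n"
            f"Task: {task_description}\n"
            f"Context from previous task: {context or 'none'}"
        )
        return llm(prompt)


@dataclass
class Task:
    description: str
    agent: Agent


def run_sequential(tasks: List[Task], llm: LLM) -> List[str]:
    """Run tasks in order; each task receives the previous task's output."""
    outputs: List[str] = []
    context = ""
    for task in tasks:
        context = task.agent.perform(task.description, context, llm)
        outputs.append(context)
    return outputs


prompt_log: List[str] = []


def fake_llm(prompt: str) -> str:
    # Deterministic stub so the sketch runs without a model or API key.
    prompt_log.append(prompt)
    role = prompt.split(".")[0].removeprefix("You are ")
    return f"[{role}] done"


researcher = Agent("Researcher", "Find facts", "You verify every claim.")
writer = Agent("Writer", "Draft the report", "You write concisely.")
tasks = [
    Task("Collect competitor pricing", researcher),
    Task("Summarize findings", writer),
]
results = run_sequential(tasks, fake_llm)
```

The point of the sketch is the shape of the workflow: the developer declares who does what, and the orchestration (here a plain loop) is fixed by the framework rather than written per project.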

Technical Pros:

- Developer Velocity: You can stand up a functioning multi-agent system in hours.

- Built-in Process Patterns: Handles delegation and inter-agent communication out of the box.

- Native Tooling: Deep integration with a variety of search and data scraping tools.

Technical Cons:

- Limited Recurrence: Struggles with complex loops where an agent must go back three steps based on a specific data validation.

- Opacity: The “black box” nature of the manager agent can make debugging specific logic failures difficult in production environments.

LangGraph: The Engineering Standard for Complex Logic

LangGraph treats agentic workflows as a graph. Each node is a function (or an agent), and each edge is a transition. Unlike standard chains, LangGraph allows for cycles. This is critical for systems that require iterative refinement.

- The Philosophy: Everything is state. A shared state object, whose schema is declared on the “StateGraph,” is passed between nodes, updated by each one, and routed by strict conditional edges.

- The Workflow: Highly customized. You can define exact conditions under which an agent should retry a task, seek human intervention, or exit the loop.

- Best Use Case: Complex AI predictive analytics, multi-step legal discovery, and autonomous coding agents.
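The graph idea can be sketched without any framework. The following is an illustration of nodes, conditional edges, and a cycle in plain Python, not LangGraph’s API. The `run_graph` helper, the `END` sentinel, and the stubbed `review` rule are assumptions made for the example.

```python
from typing import Callable, Dict, TypedDict


class State(TypedDict):
    draft: str
    attempts: int
    approved: bool


Node = Callable[[State], State]
Router = Callable[[State], str]

END = "__end__"  # hypothetical sentinel marking the exit of the graph


def write(state: State) -> State:
    attempts = state["attempts"] + 1
    return {**state, "draft": f"draft v{attempts}", "attempts": attempts}


def review(state: State) -> State:
    # Stub validation rule: approve from the third draft onward.
    return {**state, "approved": state["attempts"] >= 3}


def route_after_review(state: State) -> str:
    # Conditional edge: loop back to "write" until the draft is approved.
    return END if state["approved"] else "write"


def run_graph(
    nodes: Dict[str, Node],
    edges: Dict[str, Router],
    entry: str,
    state: State,
    max_steps: int = 20,
) -> State:
    current, steps = entry, 0
    while current != END:
        if steps >= max_steps:
            raise RuntimeError("step limit reached; possible infinite loop")
        state = nodes[current](state)
        current = edges[current](state)
        steps += 1
    return state


nodes = {"write": write, "review": review}
edges = {"write": lambda s: "review", "review": route_after_review}
initial: State = {"draft": "", "attempts": 0, "approved": False}
final = run_graph(nodes, edges, "write", initial)
```

The `max_steps` guard is a design choice worth keeping in any cyclic workflow: once loops are allowed, something has to bound them.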

[Comparison Chart: LangGraph State Management vs CrewAI Role Delegation]

Technical Pros:

- Granular Control: Total visibility into every transition. Perfect for AI systems engineering.

- Persistence & Time Travel: A built-in “checkpointer” saves state as the graph runs. If the process crashes, execution can resume from the last saved checkpoint rather than from the start. It also allows “time travel”: modifying a past state and re-running from that point.

- Human-in-the-loop (HITL): First-class support for interrupting the graph to wait for human approval before continuing.
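The checkpoint-and-resume behavior can be sketched as follows. This is a simplified, in-memory illustration of the idea rather than LangGraph’s checkpointer interface. `MemoryCheckpointer`, `run`, and the simulated failure in `flaky_score` are hypothetical names invented for the example.

```python
import json
from typing import Callable, Dict, List, Optional

State = Dict[str, int]
Step = Callable[[State], State]


class MemoryCheckpointer:
    """Keeps a JSON snapshot after every completed step (in memory only)."""

    def __init__(self) -> None:
        self.snapshots: List[str] = []

    def save(self, step_index: int, state: State) -> None:
        record = {"next_step": step_index + 1, "state": state}
        self.snapshots.append(json.dumps(record))

    def latest(self) -> Optional[dict]:
        return json.loads(self.snapshots[-1]) if self.snapshots else None


def run(steps: List[Step], state: State, saver: MemoryCheckpointer) -> State:
    # Resume from the last snapshot if one exists; otherwise start at step 0.
    checkpoint = saver.latest()
    start = 0
    if checkpoint is not None:
        start, state = checkpoint["next_step"], checkpoint["state"]
    for index in range(start, len(steps)):
        state = steps[index](state)
        saver.save(index, state)
    return state


calls = {"fetch": 0, "flaky": 0}


def fetch(state: State) -> State:
    calls["fetch"] += 1
    return {**state, "rows": 100}


def flaky_score(state: State) -> State:
    calls["flaky"] += 1
    if calls["flaky"] == 1:
        raise ConnectionError("simulated API failure")
    return {**state, "score": state["rows"] * 2}


saver = MemoryCheckpointer()
pipeline = [fetch, flaky_score]
try:
    run(pipeline, {}, saver)  # first run fails at the second step
except ConnectionError:
    pass
final = run(pipeline, {}, saver)  # second run resumes after "fetch"
```

Because every snapshot is retained, loading an earlier entry from `snapshots`, editing its state, and re-running from there is the same mechanism that makes “time travel” possible.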

Technical Cons:

- Steep Learning Curve: Requires fluency with graph concepts (nodes, edges, cycles) and explicit state management.

- Boilerplate: More code is required to define the basic structure compared to CrewAI.

Comparison: Framework Capabilities for Enterprise Scaling

| Feature | CrewAI | LangGraph |
|---|---|---|
| Orchestration Style | Role-based / Task-based | State Machine / Graph-based |
| Logic Flow | Sequential, Hierarchical | Cyclical, Branching, Custom |
| State Management | Contextual memory (simpler) | Robust checkpointing (Enterprise-grade) |
| Debugging | Standard logs / Tracing | Time-travel debugging / Node-level tracing |
| Human-in-the-Loop | Manual validation prompts | Native breakpoints and state editing |
| Scalability | Moderate (best for <10 agents) | High (supports complex distributed graphs) |

Implementation Realities: Which One Should You Choose?

Choose CrewAI if:

You are building an internal tool for operations or marketing. If your process looks like a standard SOP (Standard Operating Procedure), where Task A leads to Task B, CrewAI’s role-based abstraction will save you weeks of development time. It is the go-to for conversational AI chatbots that need to tap into specialized knowledge bases.

Choose LangGraph if:

You are building a core product feature that requires 99.9% reliability and complex reasoning. If your agents need to self-correct, browse the web, find an error, go back to a previous step, and try a different tool, LangGraph is the only viable option. For high-stakes environments like legal AI, the ability to audit the state at every node is non-negotiable.

Integration with Agix Technologies Ecosystem

At Agix, we don’t just build agents; we engineer Agentic Intelligence. Whether we are optimizing real estate data pipelines for Properti AI or managing massive datasets for HouseCanary, the framework selection is our first strategic pivot.

For most of our AI automation clients, we implement a hybrid approach. We use LangGraph to manage the overarching “State of the Business” and deploy specialized CrewAI “sub-teams” to handle specific, linear sub-tasks like report generation or data cleaning.
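As a rough illustration of the hybrid pattern, the sketch below has an outer state machine whose `report` node hands work to a fixed, linear sub-team. It uses plain Python rather than either framework. All names (`run_outer`, `run_sub_team`, `NODES`, `ROUTES`) and the sample data are hypothetical.

```python
from typing import Callable, Dict, List

State = Dict[str, str]


def run_sub_team(steps: List[Callable[[str], str]], payload: str) -> str:
    """Linear sub-team: each step consumes the previous step's output."""
    for step in steps:
        payload = step(payload)
    return payload


def clean(text: str) -> str:
    return " ".join(text.split())  # collapse stray whitespace


def format_report(text: str) -> str:
    return f"REPORT: {text}"


def ingest(state: State) -> State:
    return {**state, "raw": "  Q3   revenue   rose  4%  "}


def report(state: State) -> State:
    # The outer graph treats the whole sub-team as a single node.
    result = run_sub_team([clean, format_report], state["raw"])
    return {**state, "report": result}


NODES: Dict[str, Callable[[State], State]] = {"ingest": ingest, "report": report}
ROUTES: Dict[str, Callable[[State], str]] = {
    # Skip reporting entirely when there is nothing to report on.
    "ingest": lambda s: "report" if s["raw"].strip() else "done",
    "report": lambda s: "done",
}


def run_outer(state: State, entry: str = "ingest") -> State:
    node = entry
    while node != "done":
        state = NODES[node](state)
        node = ROUTES[node](state)
    return state


final = run_outer({})
```

The outer loop owns branching and state; the sub-team stays simple because it never has to decide where control goes next.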

LLM Access Paths: Deploying Your Multi-Agent System

Implementing these frameworks requires a robust LLM backbone. Here is how you access the intelligence required to power these orchestrators:

- Cloud APIs (GPT-4o, Claude 3.5 Sonnet): The easiest path. LangGraph typically uses LangChain’s chat-model integrations and CrewAI routes model calls through LiteLLM, so swapping models is mostly a configuration change. Claude 3.5 Sonnet is currently our preferred model for LangGraph due to its superior reasoning and “computer use” capabilities.

- Local Deployment (Ollama, vLLM): For companies with strict data privacy requirements. We often deploy CrewAI locally using Llama 3 for data processing tasks that never leave the internal network.

- Managed Platforms (LangGraph Cloud): For scaling LangGraph, the dedicated cloud environment provides superior monitoring and persistence management.

10 Mistakes You’re Making with Multi-Agent Orchestration (and How to Fix Them)

- Over-complicating Simple Tasks: Don’t use a 5-agent Crew for a task that a single well-engineered prompt can handle.

- Ignoring State Persistence: Without saved state, a failed API call at step 9 discards the work from steps 1-8. Fix: Use LangGraph’s checkpointers.

- Vague Backstories in CrewAI: “You are a helpful assistant” is not a role. Fix: Give specific constraints and personas to improve output quality.

- No Human-in-the-Loop (HITL): Letting agents spend $50 in API credits in a loop without oversight. Fix: Implement breakpoints at critical decision nodes.

- Linear Thinking in a Non-Linear World: Assuming your business process never loops back. Fix: Map your workflow as a graph before writing code.

- Hard-coding Transitions: Making the graph too rigid. Fix: Use conditional edges based on LLM output to allow the system to “decide” the next step.

- Neglecting Latency: Multi-agent systems are slow. Fix: Use asynchronous execution and streaming for a better user experience.

- Tool Overload: Giving an agent 50 tools confuses the reasoning. Fix: Create specialized agents with 3-5 tools each.

- Lack of Observability: Not knowing why an agent failed. Fix: Use LangSmith to trace every call.

- Scaling Without Strategy: Building “cool” agents that don’t solve an ROI-positive problem. Fix: Start with our Ultimate Guide to Enterprise AI Scaling.
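For the latency point in the list above, a minimal sketch of asynchronous execution with the standard-library `asyncio` module follows. The `call_agent` coroutine is a hypothetical stand-in that sleeps instead of calling a model; the agent names and delays are invented for the example.

```python
import asyncio
import time
from typing import List


async def call_agent(name: str, delay: float) -> str:
    # Stand-in for a network-bound LLM call.
    await asyncio.sleep(delay)
    return f"{name}: done"


async def run_concurrently() -> List[str]:
    # Independent agents run at the same time; gather preserves argument order.
    return await asyncio.gather(
        call_agent("researcher", 0.2),
        call_agent("analyst", 0.2),
        call_agent("critic", 0.2),
    )


start = time.perf_counter()
results = asyncio.run(run_concurrently())
elapsed = time.perf_counter() - start  # close to one delay, not three
```

This only helps when the agents do not depend on each other’s output. Steps that feed one another still run in sequence, which is where streaming partial results matters more.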
