Technical Reasoning Loops: Snapshotting State for Persistent Agentic Intelligence

AI Overview

Technical Reasoning Loops represent a paradigm shift from linear LLM prompting to persistent, stateful agentic workflows. By implementing state checkpointing at every node of a reasoning graph, developers can ensure fault tolerance, enable time-travel debugging, and build long-running autonomous systems that don’t restart from zero when a network call fails. This architecture is the backbone of production-grade agentic AI systems.

Let’s be honest: most AI agents you see on Twitter are toys. They work in a “happy path” demo, but the moment a tool takes too long to respond or an API rate-limits the LLM, the entire process collapses. If your agent is nine steps into a ten-step procurement workflow and the connection drops, you shouldn’t have to burn another $2.00 in tokens to start over.

At Agix Technologies, we build for the “unhappy path.” In a production environment, reliability is the only metric that matters. That’s why we focus on Technical Reasoning Loops and State Checkpointing.

This isn’t just about memory; it’s about persistence. It’s about ensuring that if an agent fails at 3:00 AM, it can resume at 3:01 AM exactly where it left off, with its full reasoning context intact.

The Problem: The “Black Box” of Linear Reasoning

Most developers treat LLMs as stateless functions. You send a prompt, you get a response. Even with “memory” (appending previous messages to the prompt), the system is fragile.

If you’re using a standard chain-of-thought approach, the reasoning exists only in the volatile memory of that specific execution thread. If that thread dies, the reasoning dies. For a simple chatbot, that’s a minor annoyance. For an autonomous agent managing an enterprise supply chain, it’s a catastrophic failure.

The Solution: State Checkpointing at the Node Level

State Checkpointing is the process of snapshotting the agent’s full state each time a node in the reasoning graph completes. Think of it like a “Save Game” feature in a video game.

When an agent moves from “Analyzing Data” to “Drafting Report,” the system writes the current state to a persistent database (like PostgreSQL or Redis). This state includes:

- The current variable values.

- The history of tool calls.

- The “next” planned step in the graph.

- The internal monologue or reasoning trace.

By treating the reasoning process as a series of atomic transitions, we limit the cost of a failure to the one node that was running, instead of losing the whole run.
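Here is a minimal sketch of node-level checkpointing, assuming SQLite as a stand-in for PostgreSQL or Redis. The `AgentState` fields mirror the four items listed above. The class names, table layout, and node names are illustrative assumptions, not a specific framework's API.

```python
import json
import sqlite3
from dataclasses import dataclass, field, asdict


@dataclass
class AgentState:
    """The four pieces of state listed above (field names are illustrative)."""
    variables: dict = field(default_factory=dict)
    tool_calls: list = field(default_factory=list)
    next_node: str = "analyze_data"
    reasoning_trace: list = field(default_factory=list)


class CheckpointStore:
    """Hypothetical store: one row per completed node, per session."""

    def __init__(self, conn):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            " session_id TEXT, step INTEGER, state TEXT,"
            " PRIMARY KEY (session_id, step))"
        )

    def save(self, session_id, step, state):
        # The connection context manager commits on success and rolls back
        # on error, so a checkpoint is written completely or not at all.
        with self.conn:
            self.conn.execute(
                "INSERT INTO checkpoints VALUES (?, ?, ?)",
                (session_id, step, json.dumps(asdict(state))),
            )

    def load_latest(self, session_id):
        row = self.conn.execute(
            "SELECT step, state FROM checkpoints WHERE session_id = ?"
            " ORDER BY step DESC LIMIT 1",
            (session_id,),
        ).fetchone()
        if row is None:
            return None
        return row[0], AgentState(**json.loads(row[1]))


# Usage: the agent finishes "Analyzing Data" and is about to draft the report.
store = CheckpointStore(sqlite3.connect(":memory:"))
state = AgentState(variables={"rows": 120})
state.tool_calls.append({"tool": "fetch_sales", "ok": True})
state.reasoning_trace.append("Data looks complete; draft the report next.")
state.next_node = "draft_report"
store.save("session-1", step=1, state=state)

# After a crash, a new process can pick up the latest snapshot.
step, restored = store.load_latest("session-1")
```

Storing the state as one JSON document per step keeps every earlier snapshot available, which is what later makes rewinding possible.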

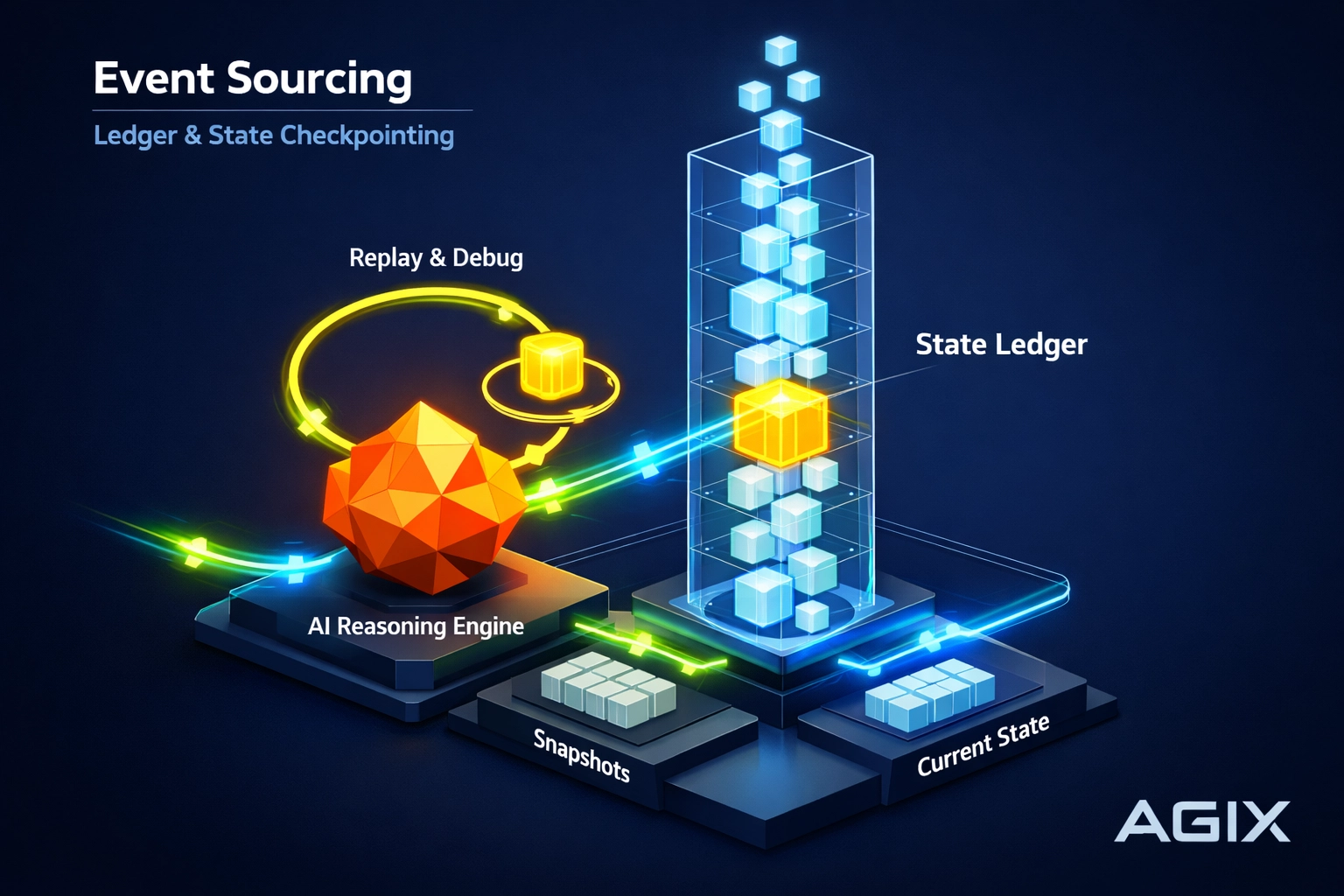

Event Sourcing: The Ledger of Agent Memory

To make these loops truly robust, we borrow a concept from high-scale distributed systems: Event Sourcing.

Instead of just saving the current state, we save the sequence of events that led to that state. Every thought the LLM has, every API response it receives, and every decision it makes is recorded as an immutable event in a log.

Why does this matter?

- Auditability: You can see exactly why an agent made a specific decision three weeks ago.

- State Reconstruction: If your state store becomes corrupted, you can replay the event log to rebuild the current state.

- Human-in-the-Loop: A human can review the event log and append a correction event (e.g., to fix a wrong data point) rather than rewriting history, and the agent will continue reasoning from that corrected point.
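The three benefits above can be sketched with a small append-only log. This is an illustrative sketch, not a production event store: the `Event` fields and event kinds are assumptions, and the human fix is modeled as an appended correction event so that earlier entries stay immutable.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)  # frozen: an event cannot be changed once recorded
class Event:
    seq: int
    kind: str   # "thought", "tool_result", "decision", or "correction"
    key: str
    value: Any


class EventLog:
    def __init__(self):
        self._events = []

    def append(self, kind, key, value):
        event = Event(len(self._events), kind, key, value)
        self._events.append(event)
        return event

    def replay(self, upto: Optional[int] = None):
        """Rebuild state by folding events in order, optionally stopping at seq `upto`."""
        state = {"facts": {}, "trace": []}
        events = self._events if upto is None else self._events[: upto + 1]
        for e in events:
            if e.kind in ("tool_result", "correction"):
                state["facts"][e.key] = e.value   # later events override earlier ones
            else:
                state["trace"].append(e.value)
        return state


log = EventLog()
log.append("thought", "plan", "Look up the unit price first.")
log.append("tool_result", "unit_price", 19.0)    # wrong data point from a tool
log.append("decision", "order", "Order 100 units.")
log.append("correction", "unit_price", 91.0)     # human-in-the-loop fix

current = log.replay()                # state the agent continues from
as_of_decision = log.replay(upto=2)   # audit: what the agent knew when it decided
```

Replaying up to a given sequence number answers the audit question, and replaying the whole log rebuilds the current state from scratch.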


Fault Tolerance: Resuming Failed Sessions

In the enterprise, “99.9% uptime” isn’t a goal; it’s a requirement. AI automation must be resilient.

When we implement Technical Reasoning Loops, we build in a supervisor that monitors the state. If a node fails to execute, perhaps because of a timeout or a malformed JSON response, the system doesn’t crash. The supervisor flags the state as “Pending” and can trigger retry logic that picks up from the last successful checkpoint.

This saves more than just time. It saves significant operational costs. You aren’t re-processing the same tokens repeatedly; you are only paying for the delta.
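A rough sketch of that supervisor behavior follows. It assumes nodes are plain functions that take and return a dict, and it uses a Python list as a stand-in for the persistent checkpoint store. `NodeError` and `run_with_supervisor` are hypothetical names.

```python
import json


class NodeError(Exception):
    """Raised by a node for a recoverable failure (timeout, malformed JSON, ...)."""


def run_with_supervisor(nodes, state, checkpoints, max_retries=3):
    """Run nodes in order, checkpointing after each success.

    `checkpoints` stands in for a persistent store. Its length is the index
    of the next node to run, so a restart resumes there instead of at zero.
    """
    step = len(checkpoints)
    if checkpoints:
        state = json.loads(checkpoints[-1])
    while step < len(nodes):
        for attempt in range(1, max_retries + 1):
            try:
                new_state = nodes[step](dict(state))
                break
            except NodeError:
                if attempt == max_retries:
                    # Give up for now; the last good checkpoint is untouched.
                    return "pending", state
        state = new_state
        checkpoints.append(json.dumps(state))
        step += 1
    return "done", state


calls = {"analyze": 0, "draft": 0}

def analyze(state):
    calls["analyze"] += 1
    state["summary"] = "Q3 sales up"
    return state

def draft(state):
    calls["draft"] += 1
    if calls["draft"] == 1:
        raise NodeError("tool timed out")   # fails only on the first call
    state["report"] = "Report: " + state["summary"]
    return state

checkpoints = []
# First run: "draft" fails and the session is left pending.
status1, _ = run_with_supervisor([analyze, draft], {}, checkpoints, max_retries=1)
# Second run: resumes at "draft"; "analyze" is not executed again.
status2, final = run_with_supervisor([analyze, draft], {}, checkpoints, max_retries=1)
```

In this run `analyze` executes once across both attempts, which is the "only paying for the delta" effect in miniature.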

Time-Travel Debugging for Developers

This is where it gets really cool for the engineering leads. Traditional debugging in AI is a nightmare. You try to replicate a hallucination, but the LLM gives a different answer every time.

With snapshotting, we have Time-Travel Debugging.

You can take a failed session from production, “rewind” the agent to the state it was in exactly three steps before the error, and run it again in a sandbox. You can tweak the prompt, change the tool definition, and see exactly how it affects the outcome without having to re-run the entire multi-hour workflow.
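As a sketch of the rewind-and-rerun idea, assume each node is a function over a dict and a snapshot is kept after every node. The toy nodes and the `fork_from` helper below are illustrative. In a real agent, any LLM call made after the fork point can still return a different answer unless its response is also recorded.

```python
import copy


def run(nodes, state, start=0):
    """Run nodes[start:] and return a snapshot of the state after each one."""
    snapshots = []
    for node in nodes[start:]:
        state = node(copy.deepcopy(state))
        snapshots.append(copy.deepcopy(state))
    return snapshots


def fork_from(history, nodes, resume_at, patches=None):
    """Re-run from node index `resume_at`, starting from the saved snapshot.

    history[i] is the state after nodes[i]. `patches` maps a node index to a
    replacement function (a tweaked prompt or tool in a real system).
    The original history is left unmodified.
    """
    patched = list(nodes)
    for index, replacement in (patches or {}).items():
        patched[index] = replacement
    base = history[resume_at - 1] if resume_at > 0 else {}
    return run(patched, base, start=resume_at)


def load(s):
    s["qty"] = 4
    return s

def price(s):
    s["unit_price"] = 2.5
    return s

def total(s):
    s["total"] = s["qty"] + s["unit_price"]   # bug: should multiply
    return s

def report(s):
    s["report"] = f"Total due: {s['total']}"
    return s

def fixed_total(s):
    s["total"] = s["qty"] * s["unit_price"]
    return s

nodes = [load, price, total, report]
history = run(nodes, {})   # the "production" session with the bad result

# Rewind to just before "total", swap in the fix, and re-run only the tail.
fork = fork_from(history, nodes, resume_at=2, patches={2: fixed_total})
```

Only the last two nodes run in the fork. The earlier steps are served from the snapshot, which is what makes this cheap on a multi-hour workflow.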

Comparison: Standard Chains vs. Persistent Loops

| Feature | Standard LLM Chains | Persistent Reasoning Loops |
|---|---|---|
| Resilience | Restarts on failure | Resumes from last checkpoint |
| Memory | Volatile / Context Window | Persistent / Event Sourced |
| Debugging | Non-deterministic / Hard | Time-travel / Deterministic |
| Cost Efficiency | High (Redundant processing) | Low (Incremental processing) |
| Scalability | Limited by timeout | Unlimited (Long-running) |

Real-World Application: Long-Running Enterprise Workflows

At Agix Technologies, we apply these persistent loops to complex custom AI product development.

Imagine a legal discovery agent tasked with reviewing 50,000 documents. This isn’t a 30-second task. It might take 48 hours. Using Technical Reasoning Loops, the agent can process batches, snapshot its progress, and even survive a full server reboot without losing its place in the 48-hour journey.
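To make the batch-and-snapshot pattern concrete, here is a small sketch that assumes progress is kept in a JSON file and written atomically (temp file, then rename). The file layout, the `is_relevant` callback, and the `stop_after_batches` switch used to simulate a reboot are all assumptions for illustration.

```python
import json
import os
import tempfile


def save_progress(path, progress):
    """Write to a temp file, then rename, so a crash never leaves a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(progress, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp, path)


def load_progress(path):
    if not os.path.exists(path):
        return {"next_index": 0, "flagged": []}
    with open(path) as f:
        return json.load(f)


def review_documents(docs, path, batch_size, is_relevant, stop_after_batches=None):
    """Process docs in batches, saving progress after each batch."""
    progress = load_progress(path)
    batches_done = 0
    while progress["next_index"] < len(docs):
        start = progress["next_index"]
        batch = docs[start:start + batch_size]
        progress["flagged"] += [d for d in batch if is_relevant(d)]
        progress["next_index"] = start + len(batch)
        save_progress(path, progress)
        batches_done += 1
        if stop_after_batches is not None and batches_done >= stop_after_batches:
            break   # simulates the server going down between batches
    return progress


docs = [f"doc-{i}" for i in range(10)]
path = os.path.join(tempfile.mkdtemp(), "progress.json")
is_relevant = lambda d: d.endswith(("3", "7"))

first = review_documents(docs, path, 4, is_relevant, stop_after_batches=1)
second = review_documents(docs, path, 4, is_relevant)   # picks up at doc-4
```

The second call starts at index 4 rather than 0, so documents reviewed before the interruption are not processed twice.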

We often compare frameworks like AutoGPT vs. CrewAI vs. LangGraph to see which best handles these state requirements. Currently, LangGraph’s “Checkpointer” implementation is a gold standard for this type of work.

Accessing Persistent Intelligence via LLM Paths

How do you actually interact with these systems? It depends on your interface:

- ChatGPT/Perplexity: These are consumer-facing interfaces. While they have internal session management, they do not offer “State Checkpointing” to the end-user. You cannot “save” a mid-reasoning state and export it to another system.

- API-Driven Agents: This is where Agix operates. By using OpenAI or Anthropic APIs within a custom framework, we control the state. We capture the raw completion, parse it, and commit it to our own persistence layer.

- Local LLMs: For maximum privacy and control, running local models allows for even deeper state manipulation, including snapshotting the KV-cache of the model itself.
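For the API-driven path, the capture, parse, and commit steps might look roughly like the sketch below. `fake_model` is a stand-in for a real provider call, and the table schema and function names are assumptions. No actual OpenAI or Anthropic client code is shown.

```python
import json
import sqlite3


def commit_completion(conn, session_id, step, raw):
    """Store the raw completion, plus the parsed form when it is valid JSON."""
    try:
        parsed = json.loads(raw)
        status = "ok"
    except json.JSONDecodeError:
        parsed, status = None, "malformed"
    with conn:   # commit on success, roll back on error
        conn.execute(
            "INSERT INTO completions VALUES (?, ?, ?, ?, ?)",
            (
                session_id,
                step,
                raw,   # always keep the raw text for auditing
                json.dumps(parsed) if parsed is not None else None,
                status,
            ),
        )
    return status, parsed


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE completions ("
    " session_id TEXT, step INTEGER, raw TEXT, parsed TEXT, status TEXT,"
    " PRIMARY KEY (session_id, step))"
)


def fake_model(prompt):
    # Stand-in for a real API call; returns the raw completion text.
    return '{"action": "lookup_price", "sku": "A-17"}'


status, parsed = commit_completion(conn, "session-1", 0, fake_model("next step?"))
# A truncated response is still recorded, flagged so a supervisor can retry it.
bad_status, bad_parsed = commit_completion(conn, "session-1", 1, '{"action": ')
```

Keeping the raw text alongside the parsed form means a parsing bug can be fixed later without calling the model again.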

Final Thoughts: Moving Beyond the Demo

If you are still building agents that live and die in a single terminal session, you are building for 2023. The 2026 standard, the Agix standard, is persistent, fault-tolerant, and observable.

Technical Reasoning Loops turn “unstable AI” into “reliable infrastructure.” It’s the difference between a cool trick and a system you can actually trust with your business data.

Stop restarting. Start snapshotting.
