The Ultimate Guide to the Anatomy of an Autonomous Agent: Engineering Beyond the Hype

AI Overview

Autonomous agents represent the transition from generative AI to agentic AI. Unlike a standard LLM, which waits for a prompt and then generates text, an autonomous agent is a system designed to achieve a goal by perceiving its environment, reasoning through sub-tasks, and executing actions via external tools. This guide breaks down the multi-layered architecture (Sensing, Reasoning, Memory, and Action) required to deploy production-ready agentic systems that scale.

The era of the “GPT wrapper” is over. For founders and ops leads at 10-200 employee companies, the focus has shifted from “What can AI say?” to “What can AI do?”

An autonomous agent isn’t just a chatbot with a longer prompt. It is a sophisticated piece of AI systems engineering that functions as a digital employee. It doesn’t just predict the next token; it predicts the next action.

To build systems that actually move the needle on ROI, whether by reducing operational overhead by 40% or increasing lead throughput by 300%, you need to understand the underlying anatomy.

The Four Pillars of Agentic Architecture

An autonomous agent is composed of four primary subsystems. If any one of these is weak, the agent fails. It either “hallucinates” a path forward or gets stuck in an infinite loop.

1. Sensing and Perception (The Input Layer)

Standard AI waits for you to type. Autonomous agents watch. Sensing is the ability to ingest data from diverse streams: APIs, webhooks, database changes, or even live video feeds.

In agentic AI systems, perception isn’t just reading text. It’s the ability to contextualize that text within a business process. For example, a fintech agent doesn’t just “see” an email; it perceives a high-priority KYC (Know Your Customer) flag that requires immediate escalation.
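As a minimal sketch of that idea, the snippet below turns a raw event into a business-level signal. The names `RawEvent`, `Percept`, `perceive`, and the keyword list are hypothetical, and the keyword match is only a stand-in: a production system would more likely use a trained classifier or an LLM call at this step.

```python
from dataclasses import dataclass

# Hypothetical keyword rules standing in for a real classifier.
KYC_KEYWORDS = ("kyc", "know your customer", "identity verification")


@dataclass
class RawEvent:
    source: str   # e.g. "email", "webhook", "db_change"
    payload: str  # raw text carried by the event


@dataclass
class Percept:
    kind: str      # business-level meaning of the event
    priority: str  # "high" or "normal"
    event: RawEvent


def perceive(event: RawEvent) -> Percept:
    """Map a raw event onto a business-level signal."""
    text = event.payload.lower()
    if any(keyword in text for keyword in KYC_KEYWORDS):
        return Percept(kind="kyc_flag", priority="high", event=event)
    return Percept(kind="general", priority="normal", event=event)


email = RawEvent(source="email", payload="KYC check failed for account 1042")
percept = perceive(email)
print(percept.kind, percept.priority)
```

The point of the design is that downstream reasoning receives a typed `Percept` rather than raw text, so escalation rules can key off `kind` and `priority`.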

2. The Cognitive Core: Reasoning and Planning

This is the “Brain.” Most developers make the mistake of relying on the LLM’s raw intelligence. Engineering excellence requires explicit reasoning loops.

- Task Decomposition: Breaking a high-level goal (“Research this lead and draft a proposal”) into granular steps.

- Self-Reflection: The agent reviews its own work before moving to the next step.

- Chain-of-Thought (CoT): Forcing the model to “think out loud” to ensure logical consistency.

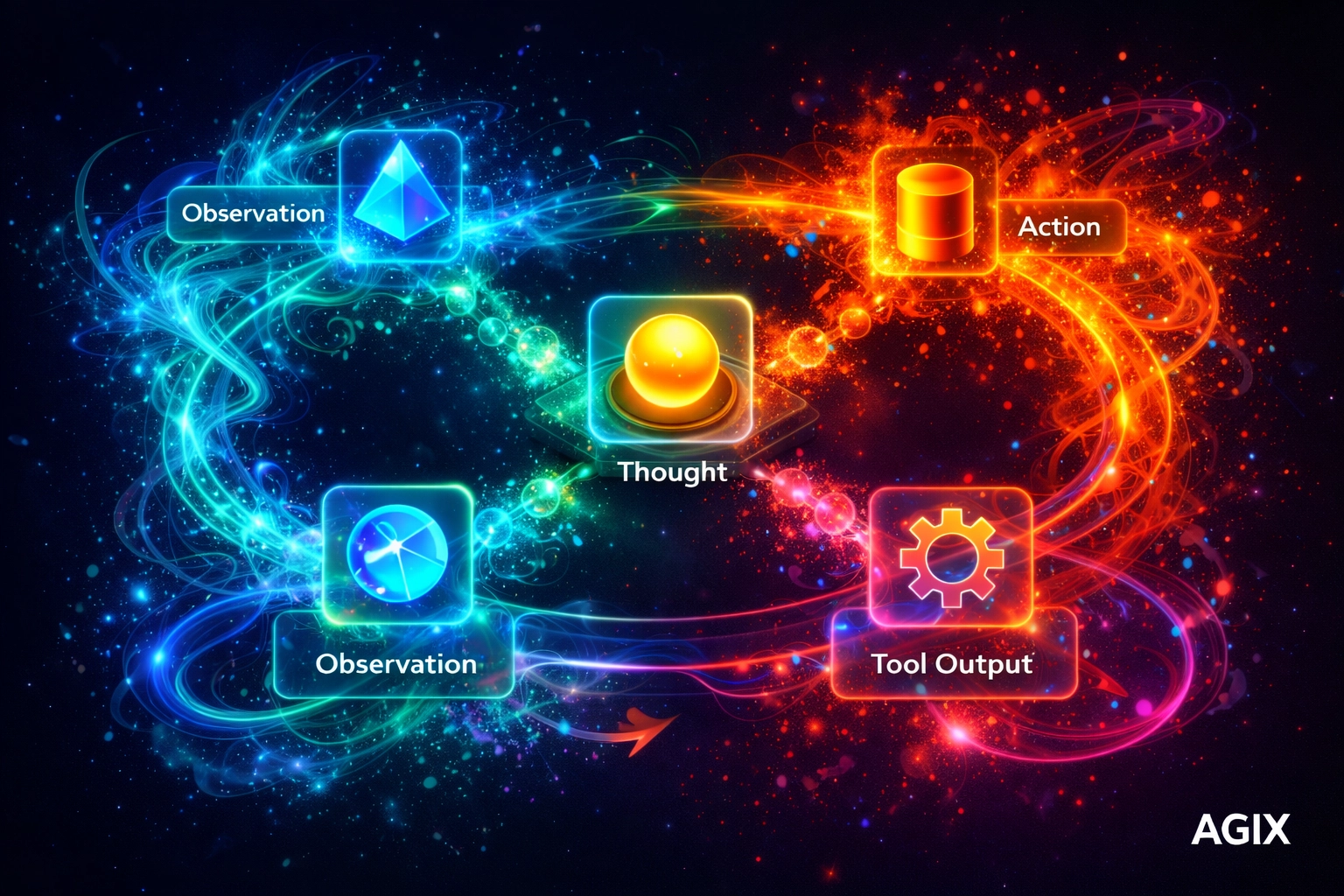

Caption: Technical visualization of a ReAct (Reason + Act) loop showing the iterative process of observation and decision-making.
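Task decomposition and self-reflection can be sketched without any model at all. In the snippet below, `decompose`, `run_with_reflection`, `flaky_execute`, and `non_empty` are hypothetical names; the string split stands in for an LLM planning call, and the review function stands in for a model critiquing its own output. Chain-of-Thought prompting is not shown here.

```python
from typing import Callable, List


def decompose(goal: str) -> List[str]:
    """Stand-in for an LLM planning call: naively split the goal on ' and '."""
    return [part.strip() for part in goal.split(" and ") if part.strip()]


def run_with_reflection(
    steps: List[str],
    execute: Callable[[str, int], str],
    review: Callable[[str, str], bool],
    max_retries: int = 2,
) -> List[str]:
    """Run each step, retrying any output that fails the review check."""
    results: List[str] = []
    for step in steps:
        for attempt in range(max_retries + 1):
            output = execute(step, attempt)
            if review(step, output):  # self-reflection gate
                results.append(output)
                break
        else:
            # No attempt passed review: stop instead of building on bad output.
            raise RuntimeError(f"step failed review: {step!r}")
    return results


def flaky_execute(step: str, attempt: int) -> str:
    # Hypothetical worker whose first attempt returns nothing useful.
    return "" if attempt == 0 else f"done: {step}"


def non_empty(step: str, output: str) -> bool:
    return bool(output.strip())


steps = decompose("Research this lead and draft a proposal")
print(run_with_reflection(steps, flaky_execute, non_empty))
```

Bounding the retries matters: an agent that reviews and retries without a limit is one of the ways a system ends up stuck in a loop.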

3. Memory Systems: Short-Term vs. Long-Term

A “stateless” agent is a useless agent. To operate at an enterprise level, agents need state management.

- Short-term Memory: This is the context window. It stores the current conversation or task progress.

- Long-term Memory: This layer uses vector databases (like Pinecone or Milvus) and RAG (Retrieval-Augmented Generation). It allows the agent to remember a client’s preference from six months ago or refer back to a 500-page compliance manual.
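The two memory tiers can be illustrated with a toy sketch that uses only the standard library. Everything here is an assumption made for illustration: `ShortTermMemory`, `LongTermMemory`, and the bag-of-words `embed` function are hypothetical, and a real deployment would replace them with a learned embedding model and a vector database.

```python
import math
from collections import Counter, deque
from typing import Deque, List, Tuple


class ShortTermMemory:
    """Keeps only the most recent turns, like a bounded context window."""

    def __init__(self, max_turns: int = 4) -> None:
        self.turns: Deque[str] = deque(maxlen=max_turns)

    def add(self, turn: str) -> None:
        self.turns.append(turn)  # the oldest turn is dropped when full


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; real systems use a learned model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LongTermMemory:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.records: List[Tuple[Counter, str]] = []

    def store(self, text: str) -> None:
        self.records.append((embed(text), text))

    def recall(self, query: str, k: int = 1) -> List[str]:
        query_vec = embed(query)
        ranked = sorted(
            self.records, key=lambda rec: cosine(query_vec, rec[0]), reverse=True
        )
        return [text for _, text in ranked[:k]]


memory = LongTermMemory()
memory.store("Client Acme prefers invoices in EUR")
memory.store("Compliance manual section 4 covers data retention")
print(memory.recall("acme invoices currency"))
```

In a RAG setup, the strings returned by `recall` would be placed into the prompt, which is how long-term memory feeds back into the short-term context window.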

4. The Action Layer: Tool Use and Execution

An agent without tools is just a philosopher. The action layer involves connecting the LLM to the “real world” via APIs, custom code execution, and AI automation workflows.
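One common way to wire this up is a tool registry: the model emits a structured action, and plain code looks up and runs the matching function. The sketch below assumes a hypothetical action shape (`{"tool": ..., "args": ...}`) and two illustrative tools; `lookup_customer` only imitates what a CRM or REST call would return.

```python
from typing import Callable, Dict

TOOL_REGISTRY: Dict[str, Callable[..., str]] = {}


def tool(name: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOL_REGISTRY[name] = fn
        return fn
    return register


@tool("add")
def add(a: float, b: float) -> str:
    return str(a + b)


@tool("lookup_customer")
def lookup_customer(customer_id: str) -> str:
    # Hypothetical stand-in for a CRM or REST API call.
    return f"customer {customer_id}: status=active"


def execute_action(action: dict) -> str:
    """Run the tool named in the action; return errors as text for the agent."""
    name = action.get("tool")
    if name not in TOOL_REGISTRY:
        return f"error: unknown tool {name!r}"
    try:
        return TOOL_REGISTRY[name](**action.get("args", {}))
    except TypeError as exc:
        return f"error: bad arguments for {name!r}: {exc}"


print(execute_action({"tool": "add", "args": {"a": 2, "b": 3}}))
print(execute_action({"tool": "lookup_customer", "args": {"customer_id": "1042"}}))
```

Returning errors as strings rather than raising lets the agent read the failure as an observation and try a different action.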

When we built solutions for HouseCanary, the “Action” wasn’t just generating a report; it was the programmatic interaction with real estate data layers to synthesize actionable insights.

Technical Reasoning Loops: How Agents “Think”

The magic of autonomy lies in the Reasoning Loop. The most common framework used in production today is ReAct (Reason + Act).

- Input: The agent receives a goal.

- Thought: The agent explains what it needs to do first.

- Action: The agent selects a tool (e.g., Google Search, SQL Query).

- Observation: The agent reads the output of that tool.

- Loop: The agent updates its “Thought” based on the “Observation” and repeats until the goal is met.
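The steps above can be sketched as a short loop. This is an illustration under stated assumptions, not a framework API: `scripted_model` is a hypothetical stand-in for an LLM call, `REACT_TOOLS` holds a single toy tool, and the `max_steps` budget is one simple guard against the infinite-loop failure mode.

```python
from typing import Callable, Dict, List, Tuple

History = List[Tuple[str, str, str]]  # (thought, tool name, observation)


def calculator(expression: str) -> str:
    """Toy tool: add the integers in an 'a+b' expression."""
    return str(sum(int(part) for part in expression.split("+")))


REACT_TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator}


def scripted_model(goal: str, history: History) -> Dict[str, str]:
    """Stand-in for an LLM: returns a thought plus an action or a final answer."""
    if not history:
        return {"thought": "I need to compute the sum.",
                "tool": "calculator", "input": "19+23"}
    last_observation = history[-1][2]
    return {"thought": "I have the result.", "final": last_observation}


def react(goal: str,
          model: Callable[[str, History], Dict[str, str]],
          max_steps: int = 5) -> str:
    history: History = []
    for _ in range(max_steps):
        decision = model(goal, history)          # Thought
        if "final" in decision:
            return decision["final"]             # goal met, exit the loop
        run_tool = REACT_TOOLS[decision["tool"]]  # Action
        observation = run_tool(decision["input"])  # Observation
        history.append((decision["thought"], decision["tool"], observation))
    raise RuntimeError("step budget exhausted before the goal was met")


print(react("What is 19 + 23?", scripted_model))  # prints 42
```

Swapping `scripted_model` for a real model call leaves the loop itself unchanged, which is why the step budget and the tool dispatch are worth keeping in ordinary code rather than in the prompt.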

This loop is what separates conversational AI from true agentic intelligence.

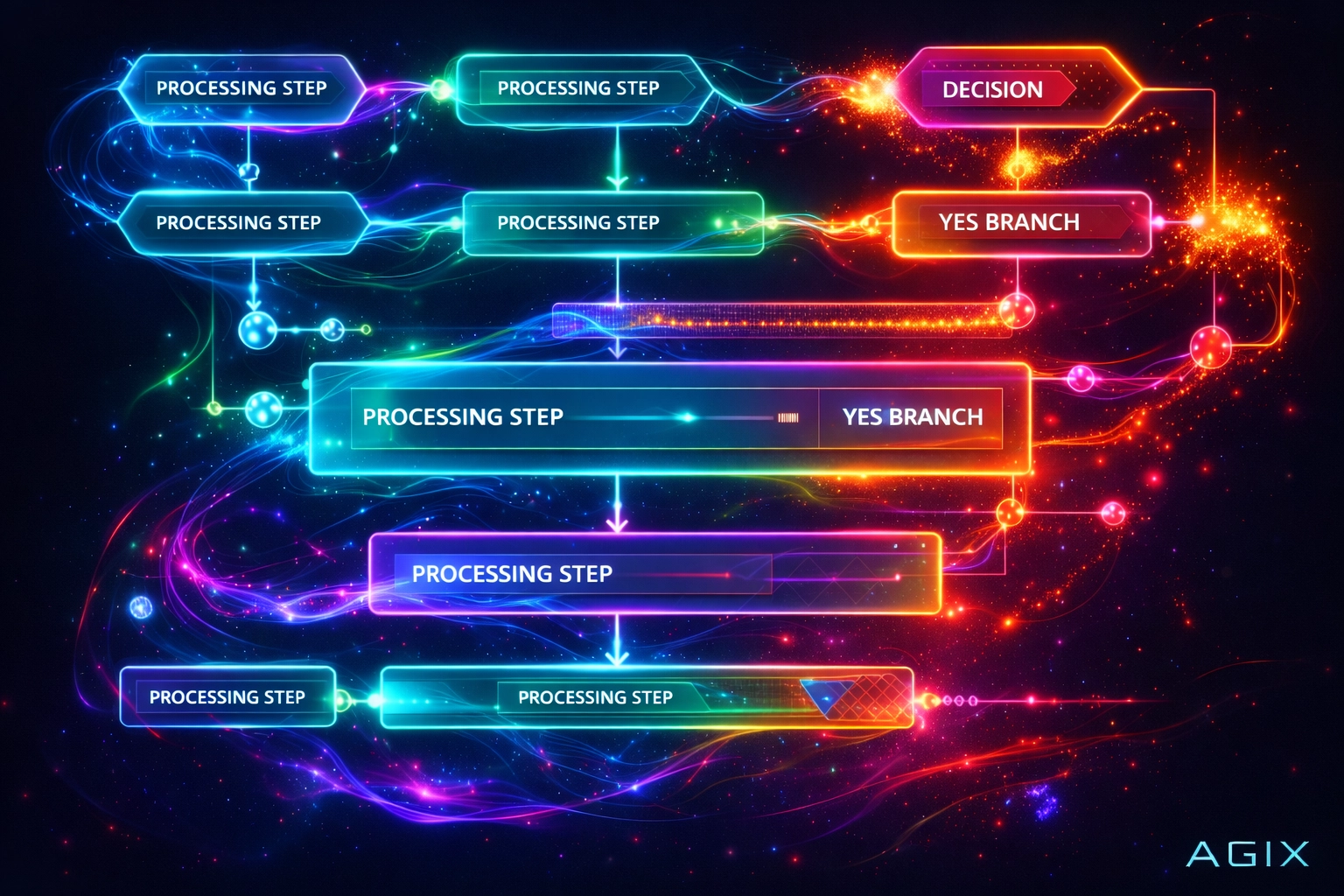

Caption: An operational intelligence flowchart showing how data flows through decision nodes in an agentic system.

Comparing Agent Frameworks: LangGraph vs. CrewAI vs. AutoGPT

Choosing the right “skeleton” for your agent’s anatomy is critical.

| Feature | AutoGPT | CrewAI | LangGraph |
|---|---|---|---|
| Primary Use | Experimental / Research | Role-based Multi-agent | Production / Cyclic Flows |
| Control | Low (prone to loops) | Medium | High (Fine-grained) |
| Complexity | Low | Medium | High |
| Best For | Testing ideas | Content/Marketing teams | Complex Enterprise Logic |

For a deeper dive into these frameworks, see our comprehensive agent framework comparison.

Engineering for Reliability: The Multi-Agent System (MAS)

Single agents often crumble under complex, multi-variable tasks. The solution? Multi-Agent Orchestration.

Instead of one “God-Agent,” we engineer a swarm of specialized agents:

- The Manager: Orchestrates the workflow and assigns tasks.

- The Researcher: Gathers data from predictive analytics tools.

- The Editor: Validates the output against brand guidelines.

- The Executor: Pushes the final data to the CRM.

This modularity is exactly how we scaled operations for Properti AI, allowing for parallel processing and significantly higher accuracy rates.
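A stripped-down version of this division of labor might look like the sketch below. The four functions mirror the roles listed above but are hypothetical: each one is a plain function standing in for a full agent, the CRM is a Python list, and the brand check is a banned-word filter. Research runs in a thread pool to hint at the parallelism; real orchestration would add retries, logging, and error handling.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def researcher(lead: str) -> Dict[str, str]:
    # Hypothetical data gathering; a real agent would call external tools.
    return {"lead": lead, "summary": f"{lead} is evaluating automation vendors"}


def editor(draft: Dict[str, str], banned_words: List[str]) -> bool:
    """Validate the draft against (toy) brand guidelines."""
    text = draft["summary"].lower()
    return not any(word in text for word in banned_words)


def executor(draft: Dict[str, str], crm: List[Dict[str, str]]) -> None:
    """Push the approved draft to the CRM (here, an in-memory list)."""
    crm.append(draft)


def manager(leads: List[str],
            crm: List[Dict[str, str]],
            banned_words: List[str]) -> List[str]:
    """Assign work to each specialist and return the leads that were rejected."""
    with ThreadPoolExecutor() as pool:
        drafts = list(pool.map(researcher, leads))  # parallel research, same order
    rejected: List[str] = []
    for draft in drafts:
        if editor(draft, banned_words):
            executor(draft, crm)
        else:
            rejected.append(draft["lead"])
    return rejected


crm_records: List[Dict[str, str]] = []
print(manager(["Acme", "Cheap Co"], crm_records, banned_words=["cheap"]))
print(crm_records)
```

Because each role has one narrow job, a failure can be traced to a single function, which is the practical benefit of avoiding one “God-Agent.”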

Caption: Step-by-step workflow of a multi-agent system executing business process automation.

LLM Access Paths: Implementing the Anatomy

How do you actually access these capabilities? Depending on your technical debt and security requirements, you have three primary paths:

- Direct API (OpenAI/Anthropic): The fastest way to build. High performance, but you are subject to their rate limits and privacy policies. Perfect for comparing Claude vs GPT vs Gemini in specific use cases.

- Hosted Assistant Platforms (Poe, Perplexity): Good for simple research agents, but they lack the deep integration required for Fintech AI solutions.

- Local/Private Deployment (Ollama, vLLM): Necessary for high-compliance industries. You host the “Anatomy” on your own servers to ensure 100% data sovereignty.
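Whichever path you choose, it helps to keep the agent code independent of the provider. The sketch below shows one way to do that with a small interface; `ChatBackend`, `StubBackend`, and `choose_backend` are hypothetical names, and the stub only echoes its input where a real client for a hosted API or a self-hosted server would go.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into a completion."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubBackend:
    """Placeholder for a real client (hosted API or self-hosted server)."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def choose_backend(data_must_stay_on_premises: bool) -> ChatBackend:
    # Compliance requirements decide the deployment path, not the agent code.
    if data_must_stay_on_premises:
        return StubBackend(name="local")
    return StubBackend(name="hosted-api")


def run_agent_step(backend: ChatBackend, prompt: str) -> str:
    """The agent depends only on the interface, so backends stay swappable."""
    return backend.complete(prompt)


print(run_agent_step(choose_backend(True), "Summarize the KYC policy"))
```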

Why Agentic Engineering Matters for Scaling

Scaling a company of 50 people to 200 often leads to “Operational Bloat.” You hire more people just to manage the people you already have.

Agentic Intelligence breaks this linear growth curve.

- 99% faster data processing in lead enrichment.

- 82% reduction in manual data entry for logistics.

- 24/7/365 operational uptime without “burnout.”

Real-World Systems. Proven Scale. This isn’t about the hype of a talking computer; it’s about building the automated infrastructure of the modern enterprise.

Frequently Asked Questions

1. What is the difference between a chatbot and an autonomous agent?

Ans. A chatbot is reactive and follows a linear script or prompt response. An autonomous agent is proactive; it can break down goals, choose its own tools, and self-correct without human intervention.

2. Can autonomous agents work with my existing CRM?

Ans. Yes. Through the Action Layer, agents can be engineered to read/write data to Salesforce, HubSpot, or any custom ERP via REST APIs.

3. Are autonomous agents secure?

Ans. Security depends on the engineering. At Agix, we implement “Human-in-the-loop” (HITL) checkpoints and sandboxed execution environments to ensure agents never perform unauthorized actions.
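A human-in-the-loop checkpoint can be as simple as a gate in front of sensitive actions. The sketch below is a hypothetical illustration, not a description of any particular production setup: `SENSITIVE_ACTIONS`, `guarded_execute`, and the `approve` callback are assumed names, and sandboxed execution is not shown.

```python
from typing import Callable, Set

# Hypothetical list of actions that always need human sign-off.
SENSITIVE_ACTIONS: Set[str] = {"delete_record", "send_payment"}


def guarded_execute(action: str,
                    run: Callable[[], str],
                    approve: Callable[[str], bool]) -> str:
    """Run the action only if it is low-risk or a human approved it."""
    if action in SENSITIVE_ACTIONS and not approve(action):
        return f"blocked: {action} was not approved"
    return run()


def deny_all(action: str) -> bool:
    # Stand-in for a real review step (ticket, chat prompt, dashboard button).
    return False


print(guarded_execute("send_payment", lambda: "payment sent", deny_all))
print(guarded_execute("read_report", lambda: "report contents", deny_all))
```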

4. Do I need a massive GPU cluster to run these?

Ans. Not necessarily. Most enterprise agents leverage APIs from providers like OpenAI or Anthropic. Local deployments require hardware, but many “brain” functions can be handled efficiently via cloud-based orchestration.

5. What is “hallucination” in agents, and how do you fix it?

Ans. Hallucination is when an LLM generates false information. In agents, we mitigate this via Grounding (forcing the agent to retrieve facts from a Knowledge Base before speaking) and Verification Loops.
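Both ideas fit in a few lines. In this sketch the knowledge base is a hypothetical one-entry dictionary, `retrieve` is a naive topic match standing in for real retrieval, and the verification step simply checks that the answer quotes a retrieved fact; production checks are usually more sophisticated.

```python
from typing import Callable, Dict, List

# Hypothetical knowledge base with a single illustrative entry.
KNOWLEDGE_BASE: Dict[str, str] = {
    "refund window": "Refunds are accepted within 30 days of purchase.",
}


def retrieve(question: str) -> List[str]:
    """Naive retrieval: return facts whose topic appears in the question."""
    lowered = question.lower()
    return [fact for topic, fact in KNOWLEDGE_BASE.items() if topic in lowered]


def grounded_answer(question: str,
                    generate: Callable[[str, List[str]], str]) -> str:
    facts = retrieve(question)  # grounding: fetch facts before answering
    if not facts:
        return "I don't have a source for that."
    answer = generate(question, facts)
    # Verification: accept only answers that quote a retrieved fact.
    if not any(fact in answer for fact in facts):
        return "I could not verify an answer against the knowledge base."
    return answer


def quoting_model(question: str, facts: List[str]) -> str:
    # Stand-in for an LLM that answers from the supplied facts.
    return f"According to our policy: {facts[0]}"


print(grounded_answer("What is the refund window?", quoting_model))
```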

6. How much does it cost to build an autonomous agent?

Ans. Costs vary with complexity, but ROI is typically measured in “hours saved.” If an agent replaces 40 hours of manual data work per week, it usually pays for itself within 3-6 months.
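The payback arithmetic is easy to check for your own numbers. In the sketch below, only the 40 hours per week comes from the answer above; the hourly cost, build cost, and monthly running cost are hypothetical placeholders, so substitute your own figures.

```python
import math

WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def payback_months(hours_saved_per_week: float,
                   hourly_cost: float,
                   build_cost: float,
                   monthly_run_cost: float = 0.0) -> float:
    """Months until cumulative net savings cover the build cost."""
    monthly_savings = hours_saved_per_week * hourly_cost * WEEKS_PER_MONTH
    net_monthly = monthly_savings - monthly_run_cost
    if net_monthly <= 0:
        return math.inf  # the agent never pays for itself
    return build_cost / net_monthly


# Hypothetical inputs: $50/hour labor, $30,000 build, $500/month to run.
months = payback_months(40, hourly_cost=50, build_cost=30_000, monthly_run_cost=500)
print(f"{months:.1f} months")
```

With these placeholder inputs the result falls inside the 3-6 month range, but the outcome is sensitive to the build cost and the loaded hourly rate you assume.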

7. Which LLM is best for agents?

Ans. At the time of writing, GPT-4o and Claude 3.5 Sonnet are among the leaders, owing to their strong results on reasoning benchmarks and their ability to handle long context windows.

8. What are “tools” in the context of AI agents?

Ans. Tools are snippets of code or API endpoints that the agent can “call.” Examples include a calculator, a web search engine, or a Python interpreter.

9. Can agents collaborate with each other?

Ans. Yes. This is called a Multi-Agent System (MAS). One agent might research, while another writes, and a third audits for compliance.

10. How do I start implementing agentic AI in my business?

Ans. The best path is to identify a repetitive, high-volume process and map its “logic tree.” From there, you can build a POC (Proof of Concept) using frameworks like LangGraph. For expert assistance, visit our Agentic AI Services page.
